Every data science team has a graveyard of Jupyter notebooks — models that worked brilliantly in development but never made it to production. The gap between a working prototype and a reliable production system is enormous, and it's not a data science problem. It's an engineering problem. Here's how our data engineering team bridges that gap.

Why Notebooks Fail in Production

A Jupyter notebook is an incredible tool for exploration, experimentation, and storytelling, but it is poorly suited to production workloads. Notebooks version poorly, because code, outputs, and metadata are stored together in a single JSON file and diffs become noisy. They carry hidden state, since results depend on the order in which cells were executed. They typically lack error handling, are hard to monitor, and don't fit cleanly into deployment pipelines.

More critically, notebooks often embed data loading, preprocessing, feature engineering, model training, and inference in a single linear flow. In production, each of these stages needs to be independently testable, deployable, monitorable, and scalable.
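As a rough illustration of what "independently testable" means, here is a minimal sketch that splits a notebook-style linear flow into separate functions. The function names, the toy data, and the one-parameter model are all hypothetical and chosen only to keep the example small; a real pipeline would put each stage behind its own job or service.

```python
from typing import Dict, List

Row = Dict[str, float]


def load_data() -> List[Row]:
    # Stand-in for a real data source (hypothetical values).
    return [{"x": 1.0, "y": 2.0}, {"x": 2.0, "y": 4.0}, {"x": 3.0, "y": 6.0}]


def preprocess(rows: List[Row]) -> List[Row]:
    # Keep only rows that have both fields the model needs.
    return [r for r in rows if "x" in r and "y" in r]


def train(rows: List[Row]) -> float:
    # Least-squares slope for a line through the origin: sum(x*y) / sum(x^2).
    numerator = sum(r["x"] * r["y"] for r in rows)
    denominator = sum(r["x"] ** 2 for r in rows)
    return numerator / denominator


def predict(slope: float, x: float) -> float:
    # Inference is a pure function of the trained parameter and the input.
    return slope * x


if __name__ == "__main__":
    model = train(preprocess(load_data()))
    print(predict(model, 5.0))
```

Because each stage takes plain inputs and returns plain outputs, it can be unit tested, swapped, or scaled without rerunning the others.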

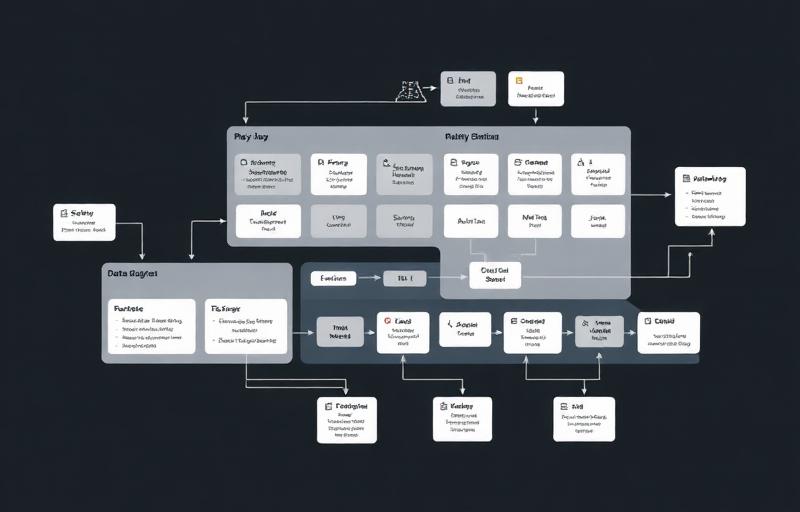

The Production ML Architecture

We decompose the notebook into a proper production architecture with distinct, independently managed components:

- Feature Store — centralized, versioned feature computation ensuring training/serving consistency

- Training Pipeline — automated, reproducible model training with experiment tracking (MLflow)

- Model Registry — versioned model artifacts with metadata, metrics, and approval workflows

- Serving Infrastructure — low-latency prediction endpoints with auto-scaling (SageMaker or custom)

- Monitoring System — tracking prediction quality, data drift, and model performance over time

Feature Engineering in Production

The most insidious production ML bug is training-serving skew: the features computed during training don't exactly match those computed at inference time. A slightly different date-parsing rule, a missing normalization step, or a different library version can each silently degrade model performance without triggering any errors.

We solve this with a shared feature store that computes features once and makes them available to both training and serving pipelines. Features are versioned and tested, so the feature definitions the model sees in production are the same ones it learned from.
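The core idea can be sketched without a full feature store: put the feature logic in one function and have both pipelines import it. This is a simplified stand-in, not a feature store implementation, and the field names (`signup_date`, `amount`), the scaling constant, and the version tag are hypothetical.

```python
from datetime import datetime, timezone
from typing import Dict

FEATURE_VERSION = "v1"  # hypothetical tag, bumped whenever the logic below changes


def compute_features(raw: Dict[str, str]) -> Dict[str, float]:
    """Single source of truth for feature logic, shared by training and serving."""
    # One date-parsing rule, used everywhere.
    ts = datetime.strptime(raw["signup_date"], "%Y-%m-%d").replace(tzinfo=timezone.utc)
    amount = float(raw["amount"])
    return {
        "signup_dow": float(ts.weekday()),  # Monday is 0
        "amount_scaled": amount / 100.0,    # one normalization rule, used everywhere
    }


def build_training_row(raw: Dict[str, str], label: int) -> Dict[str, float]:
    # Training path: shared features plus the label.
    return {**compute_features(raw), "label": float(label)}


def build_serving_row(raw: Dict[str, str]) -> Dict[str, float]:
    # Serving path: exactly the same features, no label.
    return compute_features(raw)
```

With this layout, a change to date parsing or normalization cannot reach one pipeline without reaching the other.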

Automated Retraining

Models decay. The data distribution shifts, user behavior changes, and the model's accuracy gradually degrades. We implement automated retraining pipelines that trigger either on a schedule (weekly, monthly) or when monitoring detects performance degradation beyond a threshold.
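The two triggers described above can be combined in a small decision function. This is a sketch under stated assumptions: the function name, the seven-day maximum age, and the 0.05 accuracy-drop threshold are illustrative defaults, not values from our pipelines.

```python
from datetime import datetime, timedelta


def should_retrain(
    last_trained: datetime,
    now: datetime,
    current_accuracy: float,
    baseline_accuracy: float,
    max_age: timedelta = timedelta(days=7),  # assumed schedule: weekly
    max_drop: float = 0.05,                  # assumed degradation threshold
) -> bool:
    # Schedule trigger: the model is older than the allowed age.
    too_old = (now - last_trained) >= max_age
    # Performance trigger: accuracy fell more than max_drop below the baseline.
    degraded = (baseline_accuracy - current_accuracy) > max_drop
    return too_old or degraded
```

In practice the accuracy inputs would come from the monitoring system, and the function would run inside whatever scheduler drives the pipeline.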

Each retraining run produces a candidate model that's evaluated against the current production model on a holdout dataset. Only if the candidate outperforms the incumbent does it get promoted. This champion/challenger pattern guards against promoting a retrained model that is worse than the one it replaces, at least as measured on the holdout data.
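A minimal sketch of the promotion decision, assuming models are plain callables and accuracy is the comparison metric. The `min_gain` margin is a hypothetical addition that lets you require a meaningful improvement rather than any improvement; ties keep the incumbent.

```python
from typing import Callable, Sequence, Tuple

Model = Callable[[float], int]
Holdout = Sequence[Tuple[float, int]]


def accuracy(model: Model, holdout: Holdout) -> float:
    # Fraction of holdout examples the model labels correctly.
    correct = sum(1 for x, y in holdout if model(x) == y)
    return correct / len(holdout)


def select_model(
    champion: Model,
    challenger: Model,
    holdout: Holdout,
    min_gain: float = 0.0,  # hypothetical margin the challenger must beat
) -> str:
    champion_score = accuracy(champion, holdout)
    challenger_score = accuracy(challenger, holdout)
    # Promote only on a strict improvement beyond the margin.
    if challenger_score > champion_score + min_gain:
        return "challenger"
    return "champion"
```

The same structure works with any metric, as long as both models are scored on the same holdout set.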

Monitoring for Data Drift

Traditional software monitoring checks if the service is up and responding. ML monitoring must go further: is the input data distribution changing? Are prediction distributions shifting? Is the model's accuracy degrading on recent data? We implement statistical tests that compare incoming data distributions against the training data baseline and alert when significant drift is detected.
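One common choice for comparing a single numeric feature against its training baseline is the two-sample Kolmogorov-Smirnov test. The sketch below implements it with the standard library only and uses the large-sample approximation for the critical value. The function names and the 0.05 significance level are assumptions for illustration, and production systems usually rely on a tested statistics library instead.

```python
import math
from bisect import bisect_right
from typing import Sequence


def ks_statistic(baseline: Sequence[float], current: Sequence[float]) -> float:
    # Largest gap between the two empirical CDFs, checked at every observed value.
    a = sorted(baseline)
    b = sorted(current)
    d = 0.0
    for v in a + b:
        cdf_a = bisect_right(a, v) / len(a)
        cdf_b = bisect_right(b, v) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def drift_detected(
    baseline: Sequence[float],
    current: Sequence[float],
    alpha: float = 0.05,  # assumed significance level
) -> bool:
    n, m = len(baseline), len(current)
    # Large-sample critical value: c(alpha) * sqrt((n + m) / (n * m)).
    c_alpha = math.sqrt(-0.5 * math.log(alpha / 2))
    critical = c_alpha * math.sqrt((n + m) / (n * m))
    return ks_statistic(baseline, current) > critical
```

A check like this would run per feature on a recent window of production inputs, with an alert raised when it returns `True`.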

A leading cause of silent ML failures in production is data drift: the real world changes but the model doesn't. Automated drift detection is not optional for any production ML system.

Getting Your Models to Production

If your data science team has models that deliver value in notebooks but haven't made it to production, the problem is almost certainly an engineering gap — not a data science gap. Our ML engineering team specializes in exactly this transition: taking validated models and building the production infrastructure around them. We can typically have a model serving predictions in production within 4-6 weeks, with full monitoring, retraining, and rollback capabilities.