Modern applications generate staggering volumes of data: user events, transaction records, IoT sensor readings, application logs, and third-party webhook payloads. The businesses that thrive are those that can turn this firehose of raw data into actionable intelligence in real time rather than waiting for overnight batch jobs. Here's how we architect event-driven data pipelines that process millions of records daily.

Batch vs. Stream: Choosing Your Architecture

Batch processing (run a big job once a day) is simpler to build and usually cheaper to run. Stream processing (process each event as it arrives) is more complex but enables real-time decision making. The right choice depends on your latency requirements: how quickly a new event has to influence a decision.

- Batch is fine for: daily reports, monthly analytics, historical trend analysis, data warehouse loads

- Streaming is necessary for: fraud detection, real-time dashboards, dynamic pricing, live personalization, alerting

- Lambda architecture (both): when you need real-time speed AND the accuracy of batch reconciliation

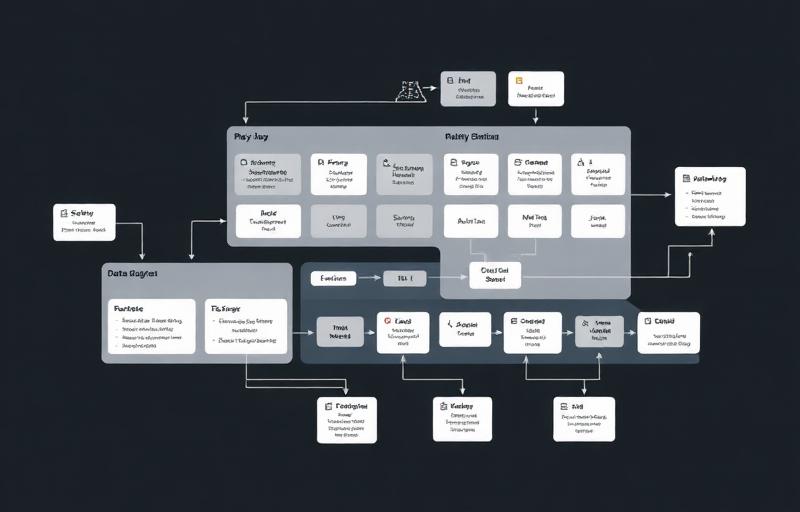

Our Streaming Architecture on AWS

Our standard real-time pipeline uses Amazon Kinesis Data Streams for event ingestion (capacity scales with the number of shards, up to millions of records per second), AWS Lambda for lightweight event transformation and routing, Amazon Data Firehose (formerly Kinesis Data Firehose) for reliable delivery to data stores, and Amazon Redshift or Snowflake as the analytical data warehouse.
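As a rough illustration of the Lambda step described above, here is a minimal sketch of a handler attached to a Kinesis data stream. The base64-encoded `kinesis.data` field and the `batchItemFailures` response shape follow the Lambda/Kinesis integration; the `transform` function, the `type` field, and the routing rule are hypothetical stand-ins for real business logic, and the partial-failure response only takes effect if `ReportBatchItemFailures` is enabled on the event source mapping.

```python
import base64
import json
from typing import List, Tuple


def transform(payload: dict) -> dict:
    """Hypothetical transformation: normalise the event type and tag a route."""
    event_type = str(payload.get("type", "unknown")).lower()
    return {
        **payload,
        "type": event_type,
        # Hypothetical routing rule: payment events get their own destination.
        "route": "payments" if event_type == "payment" else "default",
    }


def process_records(event: dict) -> Tuple[List[dict], List[str]]:
    """Decode and transform each record; collect sequence numbers that failed."""
    transformed, failed = [], []
    for record in event.get("Records", []):
        sequence_number = record["kinesis"]["sequenceNumber"]
        try:
            # Kinesis delivers each record's payload base64-encoded.
            payload = json.loads(base64.b64decode(record["kinesis"]["data"]))
            if not isinstance(payload, dict):
                raise ValueError("payload is not a JSON object")
            transformed.append(transform(payload))
        except ValueError:
            # Covers invalid base64, invalid JSON, and non-object payloads.
            failed.append(sequence_number)
    return transformed, failed


def handler(event: dict, context: object = None) -> dict:
    """Lambda entry point: transform the batch and report failed records."""
    transformed, failed = process_records(event)
    # Forwarding `transformed` to the delivery stream is omitted in this sketch.
    return {"batchItemFailures": [{"itemIdentifier": seq} for seq in failed]}
```

Keeping the decoding and transformation in `process_records` means the logic can be exercised locally with a hand-built event, without deploying anything.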

For more complex transformation logic, we use Apache Flink on Amazon Managed Service for Apache Flink (formerly Kinesis Data Analytics). Flink handles windowed aggregations, complex event processing, and stateful transformations that Lambda can't efficiently manage.
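To make "windowed aggregation" concrete, the sketch below computes per-key sums over fixed, non-overlapping (tumbling) windows in plain Python. It is not Flink code and does not use the Flink API; the `(event_time, key, value)` tuple layout and the function name are assumptions made for illustration. A stream processor such as Flink does the same bucketing continuously, and additionally handles late or out-of-order events and keeps the running state fault-tolerant.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Assumed event layout: (event_time_in_seconds, key, value)
Event = Tuple[float, str, float]


def tumbling_window_sums(
    events: Iterable[Event], window_seconds: int = 60
) -> Dict[Tuple[int, str], float]:
    """Sum values per key within fixed, non-overlapping time windows."""
    state: Dict[Tuple[int, str], float] = defaultdict(float)
    for event_time, key, value in events:
        # Every event belongs to exactly one window, identified by its start time.
        window_start = int(event_time // window_seconds) * window_seconds
        state[(window_start, key)] += value
    return dict(state)
```

The dictionary keyed by `(window_start, key)` is the "state" in a stateful transformation: it has to survive between events, which is why this kind of logic fits poorly into short-lived, stateless Lambda invocations.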

The Python ETL Layer

Python is the lingua franca of data engineering, and for good reason. Libraries like pandas, SQLAlchemy, and boto3 make it straightforward to read from most sources, transform data with complex business logic, and load it into most destinations. We structure our Python ETL code as small, testable functions, each responsible for a single transformation, orchestrated by Apache Airflow or AWS Step Functions.
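The "small, testable functions" structure might look something like the sketch below. It uses only the standard library so it runs anywhere; the field names (`user_id`, `email`, `amount`) and the three transformations are hypothetical examples, and `run_pipeline` is a stand-in for the orchestrator, which in practice would run each step as its own Airflow task or Step Functions state.

```python
from functools import reduce
from typing import Callable, Dict, Iterable, List

Row = Dict[str, object]
Transform = Callable[[List[Row]], List[Row]]


def drop_missing_ids(rows: List[Row]) -> List[Row]:
    """Remove rows that have no user_id."""
    return [row for row in rows if row.get("user_id") is not None]


def normalise_email(rows: List[Row]) -> List[Row]:
    """Trim whitespace and lowercase the email field."""
    return [{**row, "email": str(row.get("email", "")).strip().lower()} for row in rows]


def add_amount_cents(rows: List[Row]) -> List[Row]:
    """Derive an integer amount in cents from the decimal amount field."""
    return [{**row, "amount_cents": round(float(row["amount"]) * 100)} for row in rows]


def run_pipeline(rows: Iterable[Row], steps: List[Transform]) -> List[Row]:
    """Apply each transformation in order, feeding one step's output to the next."""
    return reduce(lambda acc, step: step(acc), steps, list(rows))
```

Because each function takes rows and returns new rows without side effects, it can be unit tested with a handful of sample records and reordered or reused across pipelines.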

Every transformation function is unit tested with sample data, integration tested against staging data sources, and monitored in production for execution time, error rate, and output row counts. When a transformation fails, Airflow retries it automatically and alerts the team if it fails repeatedly.
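The retry-then-alert behaviour and the per-run metrics described above can be sketched in plain Python as follows. This is an illustration of the pattern, not Airflow code: `run_with_retries`, its parameters, and the `alert` callback are hypothetical names. In Airflow itself the equivalent behaviour is normally configured on the task through settings such as `retries`, `retry_delay`, and `on_failure_callback`.

```python
import time
from typing import Callable, List, Optional


def run_with_retries(
    task: Callable[[List], List],
    rows: List,
    retries: int = 3,
    delay_seconds: float = 0.0,
    alert: Optional[Callable[[str], None]] = None,
) -> dict:
    """Run task(rows); retry on failure and alert once all attempts are used up."""
    attempts = max(1, retries + 1)
    last_error: Optional[Exception] = None
    for attempt in range(1, attempts + 1):
        started = time.perf_counter()
        try:
            output = task(rows)
            # Metrics worth shipping to a monitoring system on every run.
            return {
                "output": output,
                "attempts": attempt,
                "duration_seconds": time.perf_counter() - started,
                "output_rows": len(output),
            }
        except Exception as exc:
            last_error = exc
            if attempt < attempts:
                time.sleep(delay_seconds)
    if alert is not None:
        alert(f"{task.__name__} failed after {attempts} attempts: {last_error!r}")
    assert last_error is not None
    raise last_error
```

Returning the attempt count, duration, and output row count alongside the data gives the monitoring layer the three signals mentioned above without the transformation function having to know about them.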

Data Quality and Validation

The most expensive data pipeline bug is one that silently produces wrong results. We implement data quality checks at every stage: schema validation at ingestion (reject events that don't match the expected format), referential integrity checks during transformation, and statistical anomaly detection on output (alert if today's row count deviates by more than two standard deviations from the historical average).
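The three kinds of check described above can each be expressed as a small function. The sketch below is a simplified illustration using only the standard library: `EXPECTED_SCHEMA`, the field names, and the function names are hypothetical, and the anomaly check uses the sample standard deviation of past daily row counts with the two-standard-deviation threshold mentioned in the text.

```python
import statistics
from typing import Dict, List, Sequence, Set

# Hypothetical schema: field name -> required Python type.
EXPECTED_SCHEMA: Dict[str, type] = {"event_id": str, "user_id": int, "amount": float}


def schema_errors(event: dict, schema: Dict[str, type] = EXPECTED_SCHEMA) -> List[str]:
    """Return a list of schema problems; an empty list means the event is valid."""
    errors = []
    for field, expected_type in schema.items():
        if field not in event:
            errors.append(f"missing field: {field}")
        elif not isinstance(event[field], expected_type):
            actual = type(event[field]).__name__
            errors.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return errors


def missing_references(rows: List[dict], key: str, known_ids: Set) -> List[dict]:
    """Referential integrity: return rows whose key is absent from the parent set."""
    return [row for row in rows if row.get(key) not in known_ids]


def row_count_is_anomalous(
    today: int, history: Sequence[int], threshold: float = 2.0
) -> bool:
    """Flag today's row count if it is more than `threshold` std devs from the mean."""
    if len(history) < 2:
        return False  # Not enough history to estimate spread.
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return today != mean
    return abs(today - mean) / stdev > threshold
```

For example, with a history of `[1000, 1020, 980, 1010, 990]` a count of 1010 is within normal variation, while a count of 1100 is several standard deviations out and would trigger an alert.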

A data pipeline that runs without errors but produces wrong results is worse than one that fails loudly. Invest in data quality validation at every stage; it will save you from making business decisions based on bad data.

Scaling and Cost Optimization

Our pipelines process millions of records daily for clients across healthcare, fintech, e-commerce, and logistics. Keeping costs manageable at scale comes down to four practices: right-sizing compute resources (Lambda for simple transformations, Flink for complex ones), using columnar storage formats (Parquet) for analytical queries, implementing data lifecycle policies (move old data to cheaper storage tiers), and caching frequently accessed results.
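As one example of a data lifecycle policy, the sketch below builds an Amazon S3 lifecycle configuration that moves objects under a prefix to cheaper storage classes as they age. It assumes the data lives in S3, which the text above does not state; the function name, the prefix, and the 30/90-day thresholds are illustrative choices, not recommendations.

```python
from typing import Optional


def build_lifecycle_configuration(
    prefix: str,
    warm_after_days: int = 30,
    cold_after_days: int = 90,
    expire_after_days: Optional[int] = None,
) -> dict:
    """Build an S3 lifecycle configuration that tiers old data to cheaper storage."""
    if warm_after_days >= cold_after_days:
        raise ValueError("warm_after_days must be smaller than cold_after_days")
    rule = {
        "ID": "tier-" + (prefix.strip("/").replace("/", "-") or "all"),
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [
            # Infrequent-access storage for data that is rarely queried.
            {"Days": warm_after_days, "StorageClass": "STANDARD_IA"},
            # Archive storage for data kept mainly for retention.
            {"Days": cold_after_days, "StorageClass": "GLACIER"},
        ],
    }
    if expire_after_days is not None:
        rule["Expiration"] = {"Days": expire_after_days}
    return {"Rules": [rule]}
```

The resulting dictionary has the shape that boto3's S3 client accepts as the `LifecycleConfiguration` argument of `put_bucket_lifecycle_configuration`, alongside a `Bucket` name of your own. Building it in a pure function keeps the policy reviewable and testable before anything is applied to a real bucket.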

If your organization is sitting on valuable data that's trapped in operational databases, spreadsheets, or third-party systems, a well-architected data pipeline is the first step toward unlocking its value. We can help you design and build a pipeline that feeds your analytics, powers your ML models, and gives your team real-time visibility into what matters.